As we move further into the twenty-first century, the age-old agricultural industry is exploring new technologies, including intelligent solutions, that can help farmers “make smarter decisions on the field and do more with less,” according to McKinsey & Company. Autonomous farming solutions promise to boost output, ease labor shortages, improve safety, and maximize equipment utilization. However, the farm is frequently a harsh environment. Beyond the typical adverse conditions that today’s equipment must handle, such as mud, wind, rain, and fog, dust presents unique challenges for autonomous systems: it makes it very difficult to “see” and to guide a vehicle or piece of “intelligent” equipment.

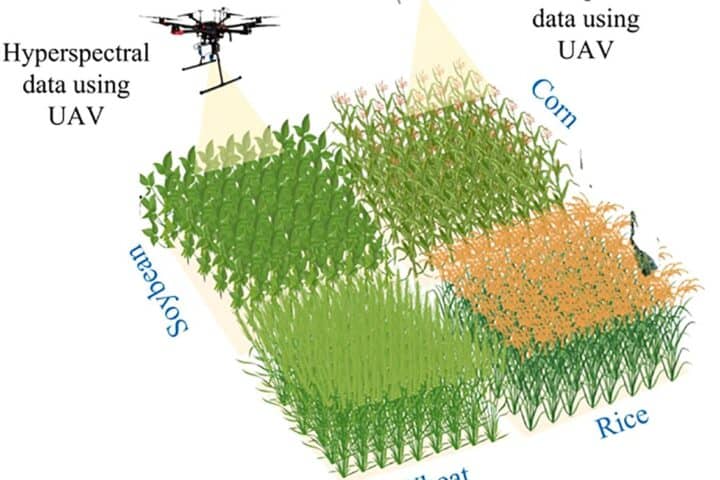

Harvesting crops and cultivating soil is a dusty endeavor. Additionally, climate scientists think we may see an ever-widening swath of dustier farmland as temperatures rise and rainfall declines. According to NASA scientist Benjamin Cook, “If we continue to experience increases in aridity and drought, as we anticipate with climate change, we’ll begin to observe additional rises in dust loads in the atmosphere.” To cope with dusty farming environments, autonomous systems need sensors that can gather precise 3D images of their surroundings, even in low visibility.

Dust is made up of fine, opaque particles that, when blown up from the ground, create a thick cloud that is especially difficult to see through. Weather such as snow, rain, or fog also reduces visibility, but navigation by humans or vision-based systems is still possible in all but the most extreme circumstances. Creating an accurate 3D model of the objects hidden behind dust, however, requires a particularly high-resolution and robust sensor.

Stereo vision with 3D cameras has been shown to perform well in this demanding environment. Thanks to their precise calibration, high resolution, multiple perspectives, and wide baseline (the distance between the cameras), advanced high-resolution stereo vision sensors have shown an exceptional ability to “see” through dust in agricultural settings.

The most recent stereo vision technology provides excellent, accurate 3D images of a scene in real time. The cameras can be “untethered,” or mounted independently of one another, thanks to calibration software that runs on a GPU. The two camera images are aligned and compared at 7 to 20 frames per second, producing about 35 million depth measurements per second. This unmatched data density is what lets the new generation of stereo vision sensors “see” through dust. Any photons that pass through the dust cloud are picked up by the sensors and used to determine the depth of the objects from which they originated, at a density of 5 million pixels per frame.

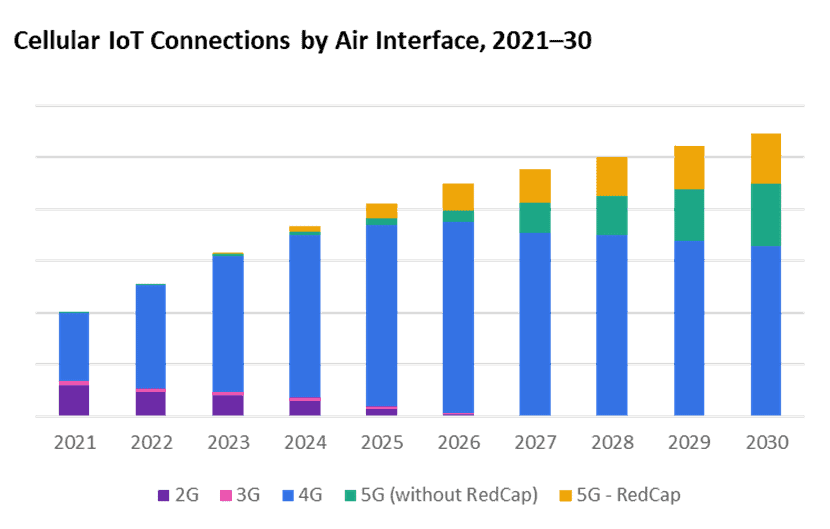

Lidar (light detection and ranging) is the most common rival technology for ranging. A lidar emits laser light, which bounces off nearby objects before returning to a sensor inside the lidar. The lidar calculates the distance to the object each photon bounced off from the time it takes for those photons to return. Lidar is precise, but it depends on the photons passing through the dust cloud and back again without being blocked by dust particles in either direction. Also, depending on the lidar technology, lidar delivers just 600,000 to 6 million points per second, whereas advanced stereo vision has 35 million points per second to work with. Lidar is therefore considerably less effective at measuring distance in dusty environments, both because it has 6x to 60x fewer points to work with and because each photon depends on a successful round trip.
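The reasoning above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the time-of-flight formula is standard, but the exponential (Beer-Lambert style) attenuation model and the `optical_depth` value are assumptions introduced here, not figures from the tests described in this article. The function names are hypothetical.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_range_m(round_trip_s: float) -> float:
    """Range from a lidar time-of-flight measurement: the light travels out and back."""
    return C_M_PER_S * round_trip_s / 2.0


def one_way_survival(optical_depth: float) -> float:
    """Fraction of photons crossing the dust cloud once (assumed exponential attenuation)."""
    return math.exp(-optical_depth)


def round_trip_survival(optical_depth: float) -> float:
    """Fraction of photons that cross the same cloud twice, as a lidar pulse must."""
    return one_way_survival(optical_depth) ** 2


def point_ratio(stereo_points: float, lidar_points: float) -> float:
    """How many times more points stereo has to work with (same time unit for both)."""
    return stereo_points / lidar_points


if __name__ == "__main__":
    tau = 1.0  # illustrative optical depth of the dust cloud (assumption)
    print(f"range for a 200 ns echo: {tof_range_m(200e-9):.2f} m")
    print(f"one-way survival:    {one_way_survival(tau):.3f}")
    print(f"round-trip survival: {round_trip_survival(tau):.3f}")
    print(f"point ratio vs 6M lidar:   {point_ratio(35e6, 6e6):.1f}x")
    print(f"point ratio vs 600k lidar: {point_ratio(35e6, 600e3):.1f}x")
```

Under this simple model the round-trip penalty is the square of the one-way loss, so the gap between a passive camera and a lidar widens as the dust gets thicker.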

Head-to-head comparison of advanced stereo vision sensors and lidar

To compare our advanced stereo vision platform with lidar sensors under different dust conditions, NODAR recently ran performance tests. The stereo setup used two 5.4-megapixel Sony IMX490 cameras with 6 mm lenses, a 70-degree field of view, and a 110 cm baseline. The lidar setup used an Ouster digital lidar sensor (OS1-128) with a 128-channel resolution.
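To see why the wide baseline in this setup matters, here is a minimal sketch of textbook stereo triangulation, where depth is focal length times baseline divided by disparity. It is not NODAR's algorithm. The 6 mm focal length and 110 cm baseline come from the setup described above; the 3.0 µm pixel pitch, the 0.25-pixel matching error, and the 20 cm comparison baseline are assumptions chosen for illustration, and all function names are hypothetical.

```python
def focal_length_px(focal_mm: float, pixel_pitch_um: float) -> float:
    """Convert a lens focal length in millimeters to pixels for a given pixel pitch."""
    return focal_mm * 1000.0 / pixel_pitch_um


def depth_m(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Pinhole stereo triangulation: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error_m(depth: float, focal_px: float, baseline_m: float,
                  disparity_error_px: float = 0.25) -> float:
    """First-order depth uncertainty: dZ = Z^2 / (f * B) * dd."""
    return depth ** 2 / (focal_px * baseline_m) * disparity_error_px


if __name__ == "__main__":
    f_px = focal_length_px(6.0, 3.0)  # 3.0 um pixel pitch is an assumption
    for baseline in (0.2, 1.1):       # 0.2 m is a hypothetical narrow rig for contrast
        err = depth_error_m(50.0, f_px, baseline)
        print(f"baseline {baseline:.1f} m -> depth error at 50 m: {err:.2f} m")
```

Because the depth error shrinks in proportion to the baseline, a rig with cameras 110 cm apart resolves distant objects far more finely than a compact stereo camera with the same sensors and lenses.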

Concrete was blown into the air to simulate three different dust scenarios: viewing a stationary target, driving in an off-road environment, and stationary tillage. The tests compared the two sensor technologies by the number of valid range data points each one returned, which shows how well each could “see” obstacles hidden behind the dust cloud.
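A metric of this kind could be computed along the following lines. The article does not say how a range point was judged valid, so this sketch assumes one plausible rule: a point counts as valid if the sensor returned a value and that value lies within a tolerance of the known distance to the target. The function name, the tolerance, and the sample readings are all hypothetical.

```python
import math
from typing import Iterable, Optional


def valid_fraction(ranges_m: Iterable[Optional[float]],
                   target_m: float,
                   tol_m: float = 0.5) -> float:
    """Fraction of range readings that land within tol_m of the known target range.

    Missing returns (None or NaN) and readings that stop short on the dust
    cloud itself both count as invalid.
    """
    readings = list(ranges_m)
    if not readings:
        return 0.0
    valid = sum(
        1 for r in readings
        if r is not None and not math.isnan(r) and abs(r - target_m) <= tol_m
    )
    return valid / len(readings)


if __name__ == "__main__":
    # Made-up readings of a target 20 m away, seen through dust
    readings = [20.1, 19.8, None, float("nan"), 5.0]
    print(f"valid fraction: {valid_fraction(readings, target_m=20.0):.0%}")
```

Normalizing by the number of readings in the region of interest, rather than reporting raw counts, makes the percentages comparable between sensors with very different point densities.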

The results: cameras outperform lidar

Stationary target: When the environment was dust-free, both systems returned 100% valid data points. However, when dust was blown through an open area to obscure a small cart, the 3D cameras significantly outperformed lidar at all density levels, delivering ample range measurements through light, moderate, and heavy clouds of dust where lidar could not:

- Nearly 90% of valid data points were returned by 3D cameras in light dust, while lidar leveled off at 40%.

- More than 50% of valid data points were returned by 3D cameras in moderate dust, while lidar decreased to less than 20%.

- More than 20% of valid data points were returned by 3D cameras in heavy dust, while lidar produced less than 10%.

Dynamic off-road driving: A commercial-grade tractor put the two sensor systems to the test on a high-vibration off-road route. The camera-based sensor offered longer range and higher data density than the lidar system, and its measurements were more reliable than those from the lidar.

Stationary tillage (tillage is the process of turning over soil to prepare it for planting): Cameras improved visibility in light and moderate dust. In this setting, too, the camera-based sensor offered higher-density and longer-range measurements.

The three victories

Today, autonomous farming is fostering a positive outlook in the agricultural sector. Greater agricultural productivity and profits, improved farm safety, and progress toward economic sustainability goals are just a few of the benefits that farm automation can offer, according to McKinsey. To deliver these results, automated equipment must be ready to operate in challenging farming environments, and dusty conditions are the most challenging of them. Making the smartest technology choices may be more crucial now than ever. In the end, only advanced stereo vision sensors can see through dust clouds clearly enough for automated equipment to operate efficiently and correctly today.